Downloading a file with Wget

Given a single URL, wget downloads one file and saves it in the current directory. While it runs, it shows the download progress, the file size, and the date and time of the download.
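A minimal sketch of that single-file download could look like the following. The helper name download_one is an assumption made for illustration, and the URL is supplied by the caller because the original text does not name one.

```shell
# Download a single file into the current directory.
# download_one is a hypothetical helper name, not part of Wget.
download_one() {
    if [ $# -ne 1 ]; then
        echo "usage: download_one URL" >&2
        return 2
    fi
    wget "$1"
}
```

It would be called as download_one followed by the address of the file to fetch.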

Wget version and help

The --version and --help options print the Wget version and the help text, respectively.

$ wget --version

$ wget --help

2 Invoking

By default, Wget is very simple to invoke. The basic syntax is:

wget [option]... [URL]...

Wget will simply download all the URLs specified on the command line. URL is a Uniform Resource Locator, as defined below.

However, you may wish to change some of the default parameters of Wget. You can do it two ways: permanently, adding the appropriate command to .wgetrc (see Startup File), or specifying it on the command line.

Saving a download under a different name with Wget

With the -O option (uppercase), wget saves the download under the name you specify. In this example the file wget2-2.0.0 is saved under the name wget.zip.
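The renaming can be sketched as a small wrapper around -O. The helper name download_as is hypothetical, and the URL of the wget2-2.0.0 archive is left to the caller because the original text does not give it.

```shell
# Save a download under a chosen file name using -O (uppercase).
# download_as is a hypothetical helper; both arguments come from the caller.
download_as() {
    url=$1
    output=$2
    wget -O "$output" "$url"
}
```

For the case described above, the second argument would be wget.zip.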

1 URL Format

URL is an acronym for Uniform Resource Locator. A uniform resource locator is a compact string representation for a resource available via the Internet. Wget recognizes the URL syntax as per RFC1738. This is the most widely used form (square brackets denote optional parts):

http://host[:port]/directory/file
ftp://host[:port]/directory/file
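To make the shape scheme://host[:port]/directory/file concrete, here is a rough sketch that splits such a URL with plain shell parameter expansion. split_url is a hypothetical helper, not something Wget provides, and it deliberately ignores embedded user names and passwords.

```shell
# Split a URL of the form scheme://host[:port]/directory/file into its parts.
# split_url is a hypothetical helper used only for illustration.
split_url() {
    url=$1
    scheme=${url%%://*}          # text before "://"
    rest=${url#*://}             # text after "://"
    hostport=${rest%%/*}         # up to the first "/"
    case $rest in
        */*) path=/${rest#*/} ;;
        *)   path=/ ;;
    esac
    case $hostport in
        *:*) host=${hostport%%:*}; port=${hostport##*:} ;;
        *)   host=$hostport; port= ;;
    esac
    # Print "default" when the optional port part is absent.
    printf '%s %s %s %s\n' "$scheme" "$host" "${port:-default}" "$path"
}
```

Calling split_url with http://host:8080/directory/file prints the four fields separated by spaces.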

10 FTPS Options

- ‘--ftps-implicit’

This option tells Wget to use FTPS implicitly. Implicit FTPS consists of initializing SSL/TLS from the very beginning of the control connection. This option does not send an AUTH TLS command: it assumes the server speaks FTPS and directly starts an SSL/TLS connection. If the attempt is successful, the session continues just like regular FTPS (PBSZ and PROT are sent, etc.).

Implicit FTPS is no longer a requirement for FTPS implementations, and thus many servers may not support it. If ‘--ftps-implicit’ is passed and no explicit port number is specified, the default port for implicit FTPS, 990, will be used instead of the default port for “normal” (explicit) FTPS, which is the same as that of FTP, 21.

- ‘--no-ftps-resume-ssl’

Do not resume the SSL/TLS session in the data channel. When starting a data connection, Wget tries to resume the SSL/TLS session previously started in the control connection. SSL/TLS session resumption avoids performing an entirely new handshake by reusing the SSL/TLS parameters of a previous session. Typically, the FTPS servers want it that way, so Wget does this by default. Under rare circumstances however, one might want to start an entirely new SSL/TLS session in every data connection. This is what ‘--no-ftps-resume-ssl’ is for.

- ‘--ftps-clear-data-connection’

All the data connections will be in plain text. Only the control connection will be under SSL/TLS. Wget will send a PROT C command to achieve this, which must be approved by the server.

- ‘--ftps-fallback-to-ftp’

Fall back to FTP if FTPS is not supported by the target server. For security reasons, this option is not asserted by default. The default behaviour is to exit with an error. If a server does not successfully reply to the initial AUTH TLS command, or in the case of implicit FTPS, if the initial SSL/TLS connection attempt is rejected, it is considered that such server does not support FTPS.
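As a sketch of how the implicit and explicit modes differ on the command line, the wrapper below picks one or the other. The helper name ftps_fetch and its mode argument are assumptions made for this example; the URL is expected to use the ftps:// scheme.

```shell
# Fetch a file over FTPS, choosing implicit or explicit mode.
# ftps_fetch is a hypothetical helper; mode and URL come from the caller.
ftps_fetch() {
    mode=$1
    url=$2
    case $mode in
        implicit) wget --ftps-implicit "$url" ;;  # TLS from the start, port 990 unless the URL names one
        explicit) wget "$url" ;;                  # AUTH TLS on the regular FTP port
        *) echo "unknown mode: $mode" >&2; return 2 ;;
    esac
}
```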

13 Exit Status

Wget may return one of several error codes if it encounters problems.
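A script that calls Wget can turn the numeric status into a readable message. The sketch below assumes the meanings given in the exit status list of the Wget manual (0 through 8); describe_wget_status is a hypothetical helper name.

```shell
# Translate a Wget exit status into a short description.
# The meanings follow the exit status list in the Wget manual.
describe_wget_status() {
    case $1 in
        0) echo "no problems occurred" ;;
        1) echo "generic error" ;;
        2) echo "parse error" ;;
        3) echo "file I/O error" ;;
        4) echo "network failure" ;;
        5) echo "SSL verification failure" ;;
        6) echo "username/password authentication failure" ;;
        7) echo "protocol error" ;;
        8) echo "server issued an error response" ;;
        *) echo "unknown status $1" ;;
    esac
}
```

A typical use would be to run wget, capture $? right away, and pass it to the helper.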

2 Option Syntax

Since Wget uses GNU getopt to process command-line arguments, every option has a long form along with the short one. Long options are more convenient to remember, but take time to type. You may freely mix different option styles, or specify options after the command-line arguments. Thus you may write:

wget -r --tries=10 http://fly.srk.fer.hr/ -o log

The space between the option accepting an argument and the argument may be omitted. Instead of ‘-o log’ you can write ‘-olog’.

You may put several options that do not require arguments together, like:

wget -drc URL

This is completely equivalent to:

wget -d -r -c URL

Since the options can be specified after the arguments, you may terminate them with ‘--’. So the following will try to download URL ‘-x’, reporting failure to log:

wget -o log -- -x

The options that accept comma-separated lists all respect the convention that specifying an empty list clears its value. This can be useful to clear the .wgetrc settings. For instance, if your .wgetrc sets exclude_directories to /cgi-bin, the following example will first reset it, and then set it to exclude /~nobody and /~somebody. You can also clear the lists in .wgetrc (see Wgetrc Syntax).

wget -X "" -X /~nobody,/~somebody

Most options that do not accept arguments are boolean options, so named because their state can be captured with a yes-or-no (“boolean”) variable. For example, ‘--follow-ftp’ tells Wget to follow FTP links from HTML files and, on the other hand, ‘--no-glob’ tells it not to perform file globbing on FTP URLs.

Unless stated otherwise, it is assumed that the default behavior is the opposite of what the option accomplishes. For example, the documented existence of ‘--follow-ftp’ assumes that the default is to not follow FTP links from HTML pages.

Affirmative options can be negated by prepending ‘--no-’ to the option name; negative options can be negated by omitting the ‘--no-’ prefix. This might seem superfluous: if the default for an affirmative option is to not do something, then why provide a way to explicitly turn it off?

But the startup file may in fact change the default. For instance, using follow_ftp = on in .wgetrc makes Wget follow FTP links by default, and using ‘--no-follow-ftp’ is the only way to restore the factory default from the command line.

3 Basic Startup Options

- ‘-V’
- ‘--version’

Display the version of Wget.

- ‘-h’
- ‘--help’

Print a help message describing all of Wget’s command-line options.

- ‘-b’
- ‘--background’

Go to background immediately after startup. If no output file is specified via the ‘-o’, output is redirected to wget-log.

- ‘-e command’
- ‘--execute command’

Execute command as if it were a part of .wgetrc (see Startup File). A command thus invoked will be executed after the commands in .wgetrc, thus taking precedence over them. If you need to specify more than one wgetrc command, use multiple instances of ‘-e’.
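The repeated use of ‘-e’ might look like the sketch below. fetch_with_settings is a hypothetical helper, and the two wgetrc commands it passes (wait and tries) were picked only as an illustration.

```shell
# Run Wget with extra wgetrc commands passed through repeated -e options.
# Each -e carries exactly one wgetrc command; later ones are applied after
# the commands read from .wgetrc, so they take precedence.
fetch_with_settings() {
    url=$1
    wget -e wait=1 -e tries=3 "$url"
}
```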

Downloading multiple files from a list with Wget

To download several files in one run, use the -i option with the path to a file that contains the list of URLs to download. Each URL must be on its own line.

For example, the file ‘download-linux.txt’ contains the list of URLs to download.
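A possible way to prepare such a list and pass it to Wget is sketched below. The two URLs are placeholders rather than the ones from the original article, the list is written to a temporary directory so nothing in the current directory is overwritten, and download_from_list is a hypothetical helper.

```shell
# Build a URL list file, one URL per line.
workdir=$(mktemp -d)
list_file=$workdir/download-linux.txt
cat > "$list_file" <<'EOF'
https://example.com/distro-one.iso
https://example.com/distro-two.iso
EOF

# Hand the list to Wget with -i; the files are fetched one after another.
download_from_list() {
    wget -i "$1"
}
```

The download itself would then be started with download_from_list "$list_file".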

1 Spanning Hosts

Wget’s recursive retrieval normally refuses to visit hosts different than the one you specified on the command line. This is a reasonable default; without it, every retrieval would have the potential to turn your Wget into a small version of Google.

3 Directory-Based Limits

Regardless of other link-following facilities, it is often useful to

place the restriction of what files to retrieve based on the directories

those files are placed in. There can be many reasons for this—the

home pages may be organized in a reasonable directory structure; or some

directories may contain useless information, e.g. /cgi-bin or

/dev directories.

Wget offers three different options to deal with this requirement. Each

option description lists a short name, a long name, and the equivalent

command in .wgetrc.
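As a sketch of directory-based limits, the wrapper below combines the include list (-I), the exclude list (-X) and --no-parent in one recursive retrieval. The directory names /pub and /pub/worthless are illustrative, and mirror_subtree is a hypothetical helper.

```shell
# Recursive download restricted by directory.
# -I lists directories to follow, -X lists directories to skip,
# and --no-parent keeps Wget from ascending above the starting directory.
mirror_subtree() {
    url=$1
    wget -r --no-parent -I /pub -X /pub/worthless "$url"
}
```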

4 Relative Links

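When ‘-L’ is turned on, only the relative links are ever followed. Relative links are here defined as those that do not refer to the web server root. For example, these links are relative:

<a href="foo.gif">
<a href="foo/bar.gif">
<a href="../foo/bar.gif">

These links are not relative:

<a href="/foo.gif">
<a href="/foo/bar.gif">
<a href="http://www.example.com/foo/bar.gif">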

Using this option guarantees that recursive retrieval will not span

hosts, even without ‘-H’. In simple cases it also allows downloads

to “just work” without having to convert links.

This option is probably not very useful and might be removed in a future

release.

5 Following FTP Links

The rules for FTP are somewhat specific, as it is necessary for

them to be. FTP links in HTML documents are often included

for purposes of reference, and it is often inconvenient to download them

by default.

5 Time-Stamping

One of the most important aspects of mirroring information from the

Internet is updating your archives.

Resuming a download with Wget

A large download may occasionally fail partway through. In that case, the -c option resumes the same file from the point where it was interrupted.

If you restart the download without -c, wget appends .1 to the file name and treats it as a new download. It is therefore a good idea to add -c when downloading large files.
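Resuming can be sketched as a one-line wrapper. resume_download is a hypothetical helper; it should be run in the directory that holds the partially downloaded file.

```shell
# Continue a partially downloaded file instead of starting a new copy.
# With -c, Wget picks up from the size of the existing local file.
resume_download() {
    wget -c "$1"
}
```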

1 Time-Stamping Usage

The usage of time-stamping is simple. Say you would like to download a

file so that it keeps its date of modification.
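One way to use time-stamping, shown here as a sketch, is to re-run the same command with -N so that the file is fetched again only when the remote copy is newer than the local one. fetch_if_newer is a hypothetical helper name.

```shell
# Re-download a file only if the server copy is newer than the local copy.
# -N turns on time-stamping for this run.
fetch_if_newer() {
    wget -N "$1"
}
```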

6 Startup File

Once you know how to change default settings of Wget through command line arguments, you may wish to make some of those settings permanent. You can do that in a convenient way by creating the Wget startup file, .wgetrc.

Although .wgetrc is the “main” initialization file, it is convenient to have a special facility for storing passwords. Thus Wget reads and interprets the contents of $HOME/.netrc, if it finds it. You can find the .netrc format in your system manuals.

Wget reads .wgetrc upon startup, recognizing a limited set of commands.

1 Wgetrc Location

When initializing, Wget will look for a global startup file,

/usr/local/etc/wgetrc by default (or some prefix other than

/usr/local, if Wget was not installed there) and read commands

from there, if it exists.

2 Wgetrc Syntax

The syntax of a wgetrc command is simple:

variable = value

The variable will also be called command. Valid values are different for different commands.

The commands are case-, underscore- and minus-insensitive. Thus ‘DIr__PrefiX’, ‘DIr-PrefiX’ and ‘dirprefix’ are the same. Empty lines, lines beginning with ‘#’ and lines containing white-space only are discarded.

Commands that expect a comma-separated list will clear the list on an empty command. So, if you wish to reset the rejection list specified in global wgetrc, you can do it with:

reject =
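To try the syntax without touching a real ~/.wgetrc, a startup file can be written to a temporary location and selected through the WGETRC environment variable. This is only a sketch: the temporary path, the tries value and the helper name fetch_with_rc are assumptions.

```shell
# Write a small per-user startup file.
rc_file=$(mktemp)
cat > "$rc_file" <<'EOF'
# An empty value clears a list inherited from the global wgetrc.
reject =
# Retry each download up to 10 times.
tries = 10
EOF

# Run Wget with that file as the user startup file.
fetch_with_rc() {
    WGETRC=$rc_file wget "$1"
}
```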

3 Wgetrc Commands

The complete set of commands is listed below. Legal values are listed

after the ‘=’. Simple Boolean values can be set or unset using

‘on’ and ‘off’ or ‘1’ and ‘0’.

Some commands take pseudo-arbitrary values. address values can be

hostnames or dotted-quad IP addresses. n can be any positive

integer, or ‘inf’ for infinity, where appropriate. string

values can be any non-empty string.

Most of these commands have direct command-line equivalents. Also, any wgetrc command can be specified on the command line using the ‘--execute’ switch (see Basic Startup Options).

4 Sample Wgetrc

This is the sample initialization file, as given in the distribution. It is divided into two sections: one for global usage (suitable for the global startup file), and one for local usage (suitable for $HOME/.wgetrc). Be careful about the things you change.

Note that almost all the lines are commented out. For a command to have

any effect, you must remove the ‘#’ character at the beginning of

its line.

###
### Sample Wget initialization file .wgetrc
###

## You can use this file to change the default behaviour of wget or to
## avoid having to type many many command-line options. This file does
## not contain a comprehensive list of commands -- look at the manual
## to find out what you can put into this file. You can find this here:
##   $ info wget.info 'Startup File'
## Or online here:
##   https://www.gnu.org/software/wget/manual/wget.html#Startup-File
##
## Wget initialization file can reside in /usr/local/etc/wgetrc
## (global, for all users) or $HOME/.wgetrc (for a single user).
##
## To use the settings in this file, you will have to uncomment them,
## as well as change them, in most cases, as the values on the
## commented-out lines are the default values (e.g. "off").
##
## Command are case-, underscore- and minus-insensitive.
## For example ftp_proxy, ftp-proxy and ftpproxy are the same.

##
## Global settings (useful for setting up in /usr/local/etc/wgetrc).
## Think well before you change them, since they may reduce wget's
## functionality, and make it behave contrary to the documentation:
##

# You can set retrieve quota for beginners by specifying a value
# optionally followed by 'K' (kilobytes) or 'M' (megabytes). The
# default quota is unlimited.
#quota = inf

# You can lower (or raise) the default number of retries when
# downloading a file (default is 20).
#tries = 20

# Lowering the maximum depth of the recursive retrieval is handy to
# prevent newbies from going too "deep" when they unwittingly start
# the recursive retrieval. The default is 5.
#reclevel = 5

# By default Wget uses "passive FTP" transfer where the client
# initiates the data connection to the server rather than the other
# way around. That is required on systems behind NAT where the client
# computer cannot be easily reached from the Internet. However, some
# firewalls software explicitly supports active FTP and in fact has
# problems supporting passive transfer. If you are in such
# environment, use "passive_ftp = off" to revert to active FTP.
#passive_ftp = off

# The "wait" command below makes Wget wait between every connection.
# If, instead, you want Wget to wait only between retries of failed
# downloads, set waitretry to maximum number of seconds to wait (Wget
# will use "linear backoff", waiting 1 second after the first failure
# on a file, 2 seconds after the second failure, etc. up to this max).
#waitretry = 10

##
## Local settings (for a user to set in his $HOME/.wgetrc). It is
## *highly* undesirable to put these settings in the global file, since
## they are potentially dangerous to "normal" users.
##
## Even when setting up your own ~/.wgetrc, you should know what you
## are doing before doing so.
##

# Set this to on to use timestamping by default:
#timestamping = off

# It is a good idea to make Wget send your email address in a `From:'
# header with your request (so that server administrators can contact
# you in case of errors). Wget does *not* send `From:' by default.
#header = From: Your Name <[email protected]>

# You can set up other headers, like Accept-Language. Accept-Language
# is *not* sent by default.
#header = Accept-Language: en

# You can set the default proxies for Wget to use for http, https, and ftp.
# They will override the value in the environment.
#https_proxy = http://proxy.yoyodyne.com:18023/
#http_proxy = http://proxy.yoyodyne.com:18023/
#ftp_proxy = http://proxy.yoyodyne.com:18023/

# If you do not want to use proxy at all, set this to off.
#use_proxy = on

# You can customize the retrieval outlook. Valid options are default,
# binary, mega and micro.
#dot_style = default

# Setting this to off makes Wget not download /robots.txt. Be sure to
# know *exactly* what /robots.txt is and how it is used before changing
# the default!
#robots = on

# It can be useful to make Wget wait between connections. Set this to
# the number of seconds you want Wget to wait.
#wait = 0

# You can force creating directory structure, even if a single is being
# retrieved, by setting this to on.
#dirstruct = off

# You can turn on recursive retrieving by default (don't do this if
# you are not sure you know what it means) by setting this to on.
#recursive = off

# To always back up file X as X.orig before converting its links (due
# to -k / --convert-links / convert_links = on having been specified),
# set this variable to on:
#backup_converted = off

# To have Wget follow FTP links from HTML files by default, set this
# to on:
#follow_ftp = off

# To try ipv6 addresses first:
#prefer-family = IPv6

# Set default IRI support state
#iri = off

# Force the default system encoding
#localencoding = UTF-8

# Force the default remote server encoding
#remoteencoding = UTF-8

# Turn on to prevent following non-HTTPS links when in recursive mode
#httpsonly = off

# Tune HTTPS security (auto, SSLv2, SSLv3, TLSv1, PFS)
#secureprotocol = auto

7 Examples

The examples are divided into three sections loosely based on their

complexity.

Downloading files in the background with Wget

With the -b option, wget moves to the background immediately after it starts, and the download log is written to the file wget-log.
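A background download might be wrapped as in the sketch below. download_in_background is a hypothetical helper; the optional second argument names the log file and falls back to wget-log, the name Wget itself uses when -o is not given.

```shell
# Start a download in the background with -b and write progress to a log file.
download_in_background() {
    url=$1
    log=${2:-wget-log}
    wget -b -o "$log" "$url"
}
```

Progress can then be followed by reading the log file, for example with tail -f.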

1 Simple Usage

- Say you want to download a URL. Just type:

wget http://fly.srk.fer.hr/

- But what will happen if the connection is slow, and the file is lengthy? The connection will probably fail before the whole file is retrieved, more than once. In this case, Wget will try getting the file until it either gets the whole of it, or exceeds the default number of retries (this being 20). It is easy to change the number of tries to 45, to ensure that the whole file will arrive safely:

wget --tries=45 http://fly.srk.fer.hr/jpg/flyweb.jpg

- Now let’s leave Wget to work in the background, and write its progress to log file log. It is tiring to type ‘--tries’, so we shall use ‘-t’.

wget -t 45 -o log http://fly.srk.fer.hr/jpg/flyweb.jpg &

The ampersand at the end of the line makes sure that Wget works in the background. To unlimit the number of retries, use ‘-t inf’.

- The usage of FTP is as simple. Wget will take care of login and password.

wget ftp://gnjilux.srk.fer.hr/welcome.msg

- If you specify a directory, Wget will retrieve the directory listing, parse it and convert it to HTML. Try:

wget ftp://ftp.gnu.org/pub/gnu/
links index.html

8 Various

This chapter contains all the stuff that could not fit anywhere else.

Limiting the download speed with Wget

The --limit-rate=100k option caps the download speed at 100 KB/s, and the log is written to the file wget.log.
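The rate limit combined with a log file can be sketched as follows. download_throttled is a hypothetical helper, and it is assumed here that the log goes to wget.log through the -o option.

```shell
# Download with a bandwidth cap and log progress to wget.log.
# The rate accepts suffixes such as k (kilobytes) and m (megabytes) per second.
download_throttled() {
    url=$1
    rate=${2:-100k}
    wget --limit-rate="$rate" -o wget.log "$url"
}
```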

5 Internet Relay Chat

In addition to the mailing lists, we also have a support channel set up via IRC at irc.freenode.org, #wget. Come check it out!

7 Portability

Like all GNU software, Wget works on the GNU system. However, since it

uses GNU Autoconf for building and configuring, and mostly avoids using

“special” features of any particular Unix, it should compile (and

work) on all common Unix flavors.

Various Wget versions have been compiled and tested under many kinds of

Unix systems, including GNU/Linux, Solaris, SunOS 4.x, Mac OS X, OSF

(aka Digital Unix or Tru64), Ultrix, *BSD, IRIX, AIX, and others. Some

of those systems are no longer in widespread use and may not be able to

support recent versions of Wget. If Wget fails to compile on your

system, we would like to know about it.

Thanks to kind contributors, this version of Wget compiles and works

on 32-bit Microsoft Windows platforms. It has been compiled

successfully using MS Visual C++ 6.0, Watcom, Borland C, and GCC

compilers. Naturally, it is crippled of some features available on

Unix, but it should work as a substitute for people stuck with

Windows.

Note that Windows-specific portions of Wget are not

guaranteed to be supported in the future, although this has been the

case in practice for many years now. All questions and problems in

Windows usage should be reported to the Wget mailing list at

[email protected] where the volunteers who maintain the

Windows-related features might look at them.

8 Signals

Since the purpose of Wget is background work, it catches the hangup

signal (SIGHUP) and ignores it. If the output was on standard

output, it will be redirected to a file named wget-log.

Otherwise, SIGHUP is ignored. This is convenient when you wish

to redirect the output of Wget after having started it.

Other than that, Wget will not try to interfere with signals in any way.

C-c, kill -TERM and kill -KILL should kill it alike.

2 Security Considerations

When using Wget, you must be aware that it sends unencrypted passwords

through the network, which may present a security problem. Here are the

main issues, and some solutions.

- The passwords on the command line are visible using ps. The best way around it is to use wget -i - and feed the URLs to Wget’s standard input, each on a separate line, terminated by C-d. Another workaround is to use .netrc to store passwords; however, storing unencrypted passwords is also considered a security risk.

- Using the insecure basic authentication scheme, unencrypted passwords are transmitted through the network routers and gateways.

- The FTP passwords are also in no way encrypted. There is no good solution for this at the moment.

- Although the “normal” output of Wget tries to hide the passwords, debugging logs show them, in all forms. This problem is avoided by being careful when you send debug logs (yes, even when you send them to me).
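The first workaround, feeding URLs on standard input, can be sketched non-interactively as below. fetch_from_stdin is a hypothetical helper; it relies on printf being a shell builtin, so the URL with its password is not passed as an argument to an external process.

```shell
# Pass a URL that embeds credentials on standard input rather than as a
# Wget command-line argument, so it does not show up in the ps output for wget.
fetch_from_stdin() {
    printf '%s\n' "$1" | wget -i -
}
```

In a real script the URL would preferably be read from a protected file or variable instead of being typed on the command line.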

3 Contributors

GNU Wget was written by Hrvoje Nikšić [email protected].

However, the development of Wget could never have gone as far as it has, were it not for the help of many people, either with bug reports, feature proposals, patches, or letters saying “Thanks!”.

Special thanks goes to the following people (no particular order):

A.1 GNU Free Documentation License

Version 1.3, 3 November 2008

Obsolete lists

Previously, the mailing list [email protected] was used as the

main discussion list, and another list,

[email protected] was used for submitting and

discussing patches to GNU Wget.

Messages from [email protected] are archived at

Messages from [email protected] are archived at

Wget 1.21.1-dirty

This file documents the GNU Wget utility for downloading network

data.

Automatic authorization

Setting up automatic authorization saves a little time on entering credentials. To do this, create a .netrc file in your user's home directory:

touch ~/.netrc
chmod 600 ~/.netrc
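The file then needs one entry per server. The sketch below writes such an entry to a temporary file instead of a real ~/.netrc, so it can be tried safely; the host, login and password are the placeholder names used elsewhere in this article, not real values.

```shell
# Create a .netrc-style file with a single entry and restrict its permissions.
netrc_file=$(mktemp)
chmod 600 "$netrc_file"
cat > "$netrc_file" <<'EOF'
machine ftp.storage.address
login ftp_login
password ftp_password
EOF
```

cURL can be pointed at a file in a non-default location with its --netrc-file option; with the entry stored in ~/.netrc itself, the -n option is enough.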

Capabilities

After you log in, an interface for working with the storage opens. The command syntax resembles standard Linux commands:

Uploading files

To upload the file filename to the remote storage into a folder named backup/, use the following command:

curl -T filename -u ftp_login:ftp_password ftp://ftp.storage.address/backup/

If the backup/ directory that the file should go into does not exist yet, add the --ftp-create-dirs option to the command. cURL will then create the missing directories in the storage automatically and upload the file there.
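An upload that creates missing directories could be wrapped as in this sketch. upload_backup is a hypothetical helper, and reading the credentials from the FTP_LOGIN and FTP_PASSWORD variables is an assumption of the example.

```shell
# Upload a file over FTP, creating missing remote directories on the way.
upload_backup() {
    file=$1
    remote_dir=$2   # for example ftp://ftp.storage.address/backup/
    curl --ftp-create-dirs -T "$file" -u "$FTP_LOGIN:$FTP_PASSWORD" "$remote_dir"
}
```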

Downloading files with wget

wget is a basic utility for downloading files. It is available by default in most Linux distributions.

Connecting

Unlike the ftp client, cURL does not provide a separate interface to work in. Instead, it makes a one-off connection to the storage, authenticates, requests the data, and disconnects, printing the requested information.

There are two ways to connect to the storage:

By specifying the credentials directly in the command. This is quick and convenient but insecure: the password you used can be seen in the command history.

curl -u ftp_login:ftp_password ftp://ftp.storage.address

By specifying the credentials in the .netrc file. Then the command only needs the storage address:

curl -n ftp://ftp.storage.address

In both examples cURL prints the contents of the storage root folder to the terminal. The contents of individual directories can be listed the same way, by appending the path after the FTP server address:

curl -n ftp://ftp.storage.address/backup/

Connecting and disconnecting

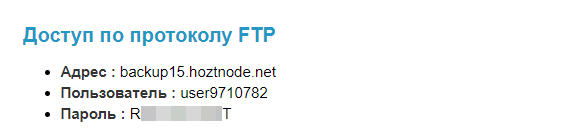

To work with an external FTP disk, we will need:

If you use the Disk for backups, these access details can be found in the Personal account, under Products, External disk for backups. Select your disk in the list and click “Instructions” at the top. The “Access via FTP” section lists the required details:

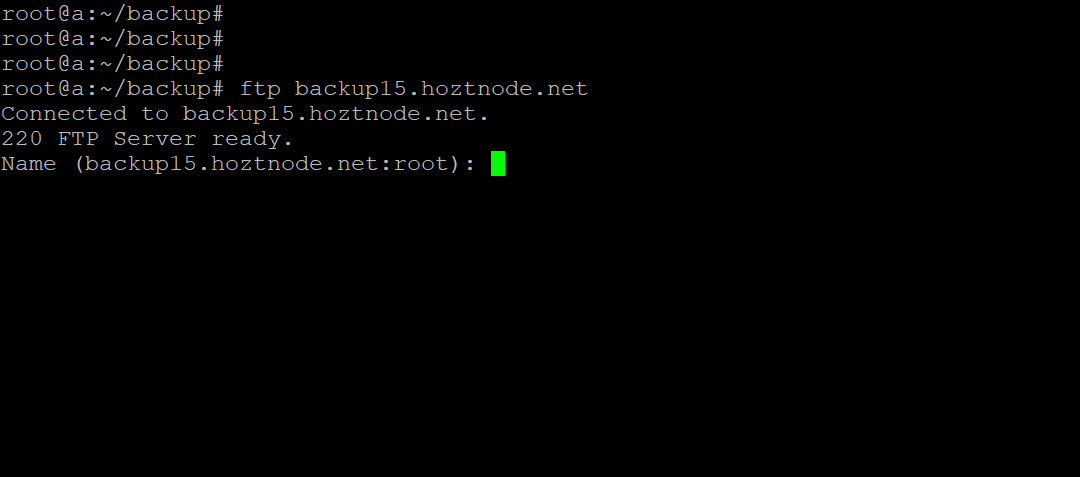

To connect, enter this command in the terminal:

ftp [storage address]

The system will ask for the FTP user's name and password:

Getting files with wget and sftp

Hi folks…

I have a script that runs every night on a Linux server and fetches files from another server using wget and the FTP protocol. These files are in a folder that cannot be accessed over HTTP.

Here is the command line it uses:

wget --directory-prefix=localFolder ftp://login:[email protected]/path/*

Access to the site has been changed to SFTP. I would like to change the script so it can fetch the files the same way as before, but I can't get it to work with SFTP.

I tried creating a secure key with ssh-keygen and then copied it to the server I wanted to access, but that didn't work, or I just can't find the right way to do it…

Thanks for your help! :)
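One possible direction, sketched under assumptions rather than offered as a tested answer: Wget does not speak SFTP, so the nightly job could call the OpenSSH sftp client in batch mode instead. The helper name fetch_over_sftp is hypothetical, and the sketch assumes that key-based login already works for the given user and host, because batch mode cannot prompt for a password.

```shell
# Fetch every file matching a remote glob over SFTP in batch mode.
# "-b -" makes sftp read its commands from standard input.
fetch_over_sftp() {
    target=$1        # user@host
    remote_glob=$2   # for example /path/*
    local_dir=$3     # an existing local directory
    printf 'get %s %s/\n' "$remote_glob" "$local_dir" | sftp -b - "$target"
}
```

If the key copied to the server is not being accepted, running ssh -v against the same user and host usually shows which keys are offered and why they are rejected.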

Working with the ftp console client

ftp is a console client for working with files over the FTP protocol. It can be installed on any Linux distribution:

| Ubuntu and Debian | CentOS |
| --- | --- |
| Installed by default; if it is missing: apt-get update && apt-get install ftp | yum install ftp |

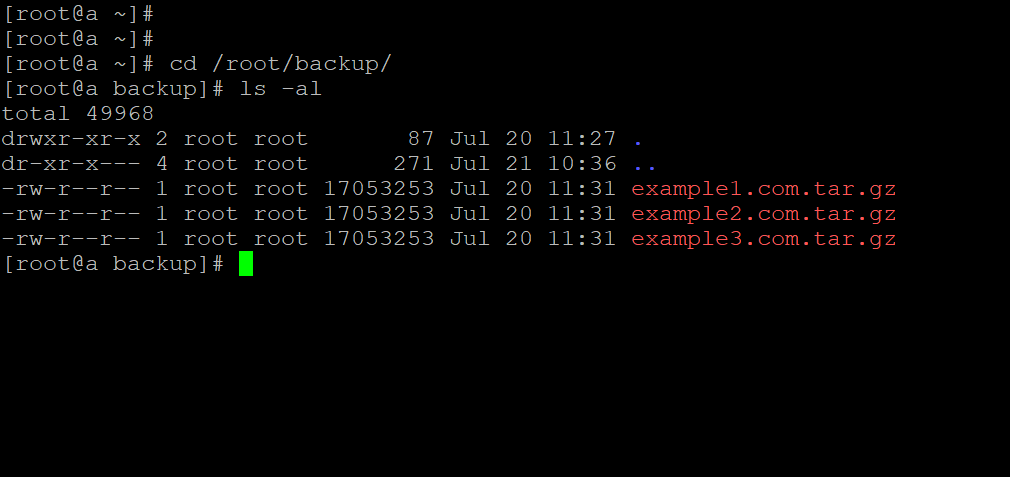

Suppose we have several backups in the /root/backup/ directory. Before working with the storage, change into that directory with the command:

cd /root/backup/

This way, after connecting to the storage, we can work with the files in this directory without extra steps.

Managing files

cURL can pass commands to the storage's FTP server, which lets you manage folders and files:

To create the folder backup/ in the FTP storage:

curl -u ftp_login:ftp_password ftp://ftp.storage.address/ -Q '-MKD backup'

To delete the folder backup/:

curl -u ftp_login:ftp_password ftp://ftp.storage.address/ -Q '-RMD backup'

To rename the file filename to new-filename:

curl -u ftp_login:ftp_password ftp://ftp.storage.address/backup/ -Q '-RNFR filename' -Q '-RNTO new-filename'

To delete the file filename from the backup/ folder of the storage:

curl -u ftp_login:ftp_password ftp://ftp.storage.address/backup/ -Q '-DELE filename'

9 Appendices

This chapter contains some references I consider useful.

Addendum: how to use this license for your documents

To use this License in a document you have written, include a copy of

the License in the document and put the following copyright and

license notices just after the title page:

If you have Invariant Sections, Front-Cover Texts and Back-Cover Texts,

replace the “with…Texts.” line with this:

If you have Invariant Sections without Cover Texts, or some other

combination of the three, merge those two alternatives to suit the

situation.

If your document contains nontrivial examples of program code, we

recommend releasing these examples in parallel under your choice of

free software license, such as the GNU General Public License,

to permit their use in free software.
